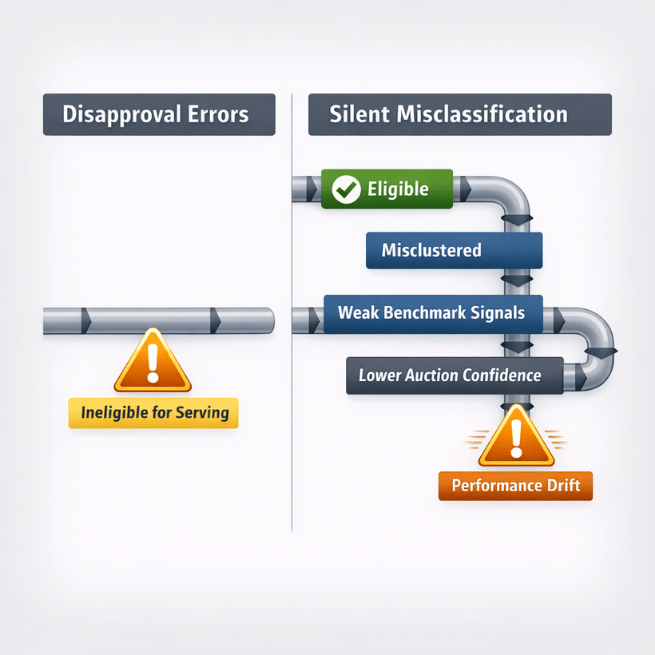

The biggest risk in feed management isn't disapproval. It's silent misclassification. Platforms can learn the wrong product signals long before anyone notices performance slipping.

If Shopping or Performance Max performance drifts without a clear cause, the problem usually isn't bidding. It's classification. Here's where this starts.

Feed Errors Don't Just Block Products — They Change How Platforms Interpret Your Catalog

Most teams monitor diagnostics for disapprovals. But warnings and 'approved with issues' statuses usually matter more.

Take a malformed GTIN as an example. The product remains eligible. But it can fail to match into Google's product knowledge graph cleanly, which weakens query-matching confidence, blocks price benchmarking against identical retailer listings, and reduces auction competitiveness in comparison surfaces. Nothing gets rejected. Performance just softens over time.

Eligibility isn't the problem. Classification is.

Once Smart Bidding has been training against a misclassified product context, fixing the feed doesn't immediately restore performance. Bid strategies don't recalibrate instantly when catalog inputs change — recovery follows the same lag pattern as any bid-strategy reset, typically the conversion window plus a learning period.

Campaign Learning Starts in the Catalog Layer Before Bidding Begins

Platforms decide what your product is before bidding even begins. They build product identity using inputs like GTIN, product_type, google_product_category, item_group_id, availability, and title structure.

Worth separating these conceptually. google_product_category is a fixed Google taxonomy that drives platform-side classification — it's not a segmentation lever you control. product_type, custom labels, and item_group_id are the levers. If those inputs are wrong, campaign learning starts wrong.

Example: high-margin SKUs missing segmentation labels get grouped with low-margin SKUs inside the same Performance Max asset group. Smart Bidding optimizes toward conversion value instead of contribution profit. No alerts. No warnings. Just the wrong optimization goal baked into campaign behavior.

This is where feed logic becomes campaign logic — and most teams never see it happening.

Invalid GTIN Formatting Removes Products From Identical-Item Matching

Teams expect GTIN errors to trigger disapprovals. More often, they break product matching reliability.

Typical scenario: supplier UPC includes spacing or hidden characters. Merchant Center accepts the item. Google can't normalize the identifier. The SKU doesn't match cleanly against the same product listed by other retailers.

Result: weaker price comparison context, narrower query matching confidence, and reduced competitiveness in surfaces that depend on identical-product matching. If identical SKUs show different impression share across retailers, this is often why.

GTIN determines whether Google can match your product to identical listings across the catalog graph. The fix isn't a fallback — it's upstream validation that fails loudly.

Example logic:

- Strip whitespace and non-numeric characters

- Validate supported GTIN lengths (8 / 12 / 13 / 14)

- Verify check digit per the GS1 validation guide

- Route failed records to a manual queue, not silent suppression
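The steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production validator: the function names, the `gtin_error` field, and the record shape are assumptions made for the example. The check-digit routine is the standard GS1 mod-10 calculation.

```python
import re
from typing import Optional, Tuple

SUPPORTED_LENGTHS = {8, 12, 13, 14}


def normalize_gtin(raw: str) -> str:
    """Drop whitespace, hidden characters, and anything else that is not 0-9."""
    return re.sub(r"[^0-9]", "", raw)


def check_digit_ok(gtin: str) -> bool:
    """GS1 mod-10 check: weight the data digits 3, 1, 3, ... starting from the right."""
    *data, check = (int(ch) for ch in gtin)
    total = sum(d * (3 if i % 2 == 0 else 1) for i, d in enumerate(reversed(data)))
    return (10 - total % 10) % 10 == check


def validate_gtin(raw: str) -> Tuple[Optional[str], Optional[str]]:
    """Return (clean_gtin, None) on success or (None, reason) on failure."""
    gtin = normalize_gtin(raw)
    if len(gtin) not in SUPPORTED_LENGTHS:
        return None, "unsupported_length"
    if not check_digit_ok(gtin):
        return None, "bad_check_digit"
    return gtin, None


def split_feed(records):
    """Separate exportable records from ones that need manual review."""
    valid, manual_queue = [], []
    for record in records:
        gtin, reason = validate_gtin(str(record.get("gtin") or ""))
        if gtin is None:
            # Fail loudly: keep the record and the reason, never drop it silently.
            manual_queue.append({**record, "gtin_error": reason})
        else:
            valid.append({**record, "gtin": gtin})
    return valid, manual_queue
```

Anything that lands in `manual_queue` goes back to the supplier or the PIM owner with the reason attached, so the bad identifier gets fixed at the source instead of patched in the feed.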

Important caveat on identifier_exists. Setting identifier_exists = no is not a safe normalization fallback. For branded products that should have a manufacturer GTIN, declaring no identifier is factually wrong, can cause disapproval, and still triggers limited performance warnings. Reserve identifier_exists = no for products that genuinely lack a GTIN — private label, custom goods, vintage, one-of-a-kind. Everything else needs a real GTIN or a fix at the source.

Variant Structure Errors Reduce Dynamic Delivery Without Triggering Diagnostics

Meta rarely surfaces variant-structure problems clearly. The behavior breaks quietly inside delivery and retargeting logic instead.

Typical pattern: missing shared item_group_id. Variants get treated as unrelated items. Meta loses the ability to suppress already-purchased variants in retargeting. Aggregated variant-level reporting in Commerce Manager breaks. Shop and Instagram Shopping lose the variant picker.

Worth being precise here. Meta doesn't rotate sizes and colors inside a single ad impression; it picks one variant per impression based on user signal. What item_group_id actually controls is whether Meta understands that the red shoe and the white shoe are the same product. Without that grouping, someone who bought the red pair keeps getting retargeted with the white pair, and your retargeting efficiency drops without a diagnostic flag.

Less obvious version: one variant missing images, another marked out of stock. Meta reduces delivery across the variant set because the catalog match rate drops. If Commerce Manager match rate slips without an obvious cause, this is usually why.

Fix this by enforcing variant completeness before export:

- Generate deterministic parent IDs from SKU structure

- Require image coverage across variant sets

- Normalize shared variant attributes (color, size, material)

- Suppress incomplete variant families where retargeting precision matters
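A rough sketch of that pre-export check, assuming SKUs follow a STYLE-COLOR-SIZE pattern so the first segment identifies the parent. The SKU pattern, the `grp-` prefix, and the function names are all hypothetical. Adapt the parsing to whatever structure your SKUs actually carry.

```python
from collections import defaultdict


def parent_id(sku: str) -> str:
    # Assumption: SKUs look like STYLE-COLOR-SIZE, so the first segment names the parent.
    return "grp-" + sku.split("-")[0].strip().upper()


def build_families(items):
    """Assign a deterministic item_group_id and bucket variants by family."""
    families = defaultdict(list)
    for item in items:
        group = parent_id(item["sku"])
        families[group].append({
            **item,
            "item_group_id": group,
            # Normalize a shared attribute so "Red" and " red " compare equal.
            "color": (item.get("color") or "").strip().lower(),
        })
    return dict(families)


def incomplete_families(families):
    """Return the group IDs where at least one variant has no image."""
    return {
        group
        for group, variants in families.items()
        if any(not v.get("image_link") for v in variants)
    }
```

Families returned by `incomplete_families` can be held back from the retargeting catalog, or just routed to whoever owns product imagery, depending on how much retargeting precision matters for that category.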

Variant integrity directly affects delivery behavior and retargeting efficiency.

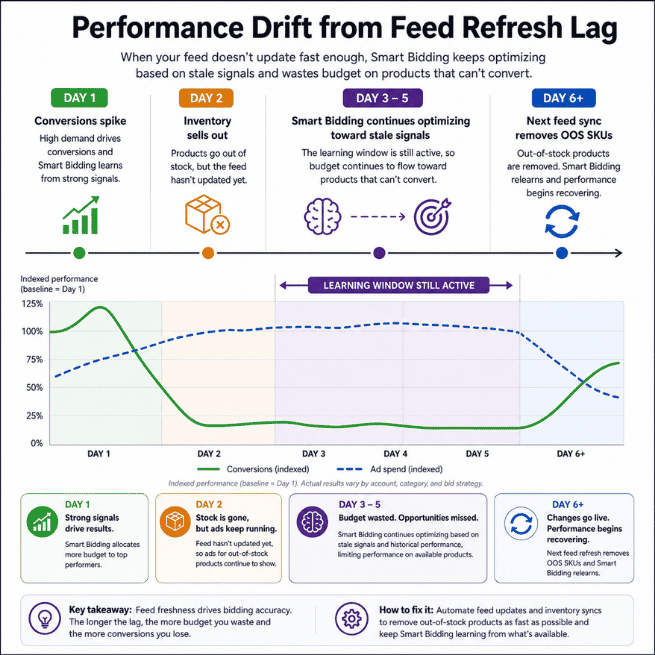

Availability Misclassification Trains Performance Max on Products That Won't Exist Tomorrow

This shows up in nearly every fast-moving catalog.

Inventory updates hourly. Feed pipeline lags. Performance Max accumulates conversion signal on high-converting SKUs. Products sell out mid-learning cycle. Conversion signals disappear. No disapprovals. ROAS destabilizes due to stale conversion mapping.

Smart Bidding is learning from products that won't exist tomorrow.

The lag isn't always at the feed level. Modern feed infrastructure supports near-real-time inventory updates via Content API and supplemental availability feeds. The bottleneck is usually upstream — the inventory system updates hourly but the export pipeline runs on a slower schedule, or stock thresholds aren't wired into the export logic at all.

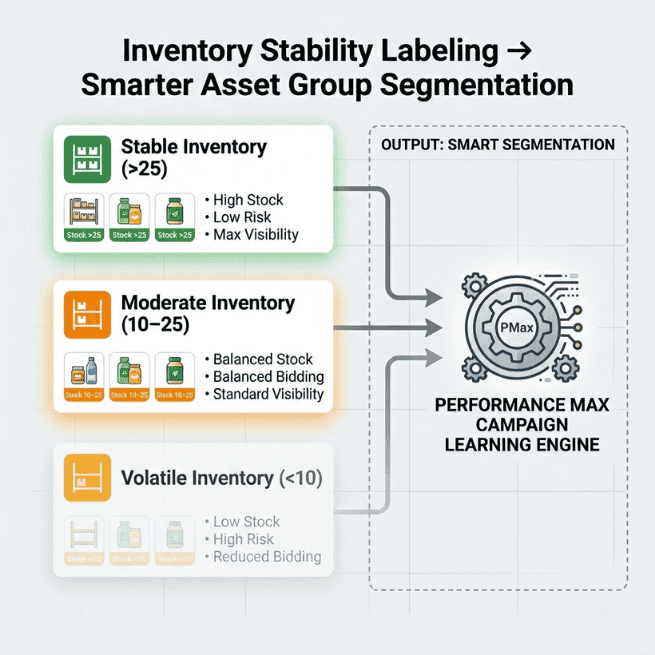

Fix this by labeling inventory stability before campaigns optimize against it.

Example rule:

custom_label_2 = inventory_stability

Logic:

- inventory > 25 units → stable

- inventory 10–25 → moderate

- inventory < 10 → volatile

Calibrate those thresholds against sales velocity rather than treating them as fixed values. For a fast-moving consumables catalog, 25 units is a few hours of inventory. For furniture, it's six months. Whatever thresholds you pick, segment asset groups by stability tier so Smart Bidding learns from durable inventory instead of temporary winners.
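One way to make the thresholds velocity-aware is to label on days of cover (units on hand divided by average daily units sold) instead of raw unit counts. The sketch below assumes you can pull a daily sales rate per SKU. The 14-day and 5-day cutoffs are placeholder values, not recommendations.

```python
def inventory_stability(
    units_on_hand: int,
    avg_daily_units_sold: float,
    stable_days: float = 14.0,    # hypothetical cutoff, tune per catalog
    volatile_days: float = 5.0,   # hypothetical cutoff, tune per catalog
) -> str:
    """Return the value to write into custom_label_2."""
    if units_on_hand <= 0:
        return "volatile"
    if avg_daily_units_sold <= 0:
        # No recent sales: stock on hand is not at risk of selling out.
        return "stable"
    days_of_cover = units_on_hand / avg_daily_units_sold
    if days_of_cover >= stable_days:
        return "stable"
    if days_of_cover >= volatile_days:
        return "moderate"
    return "volatile"
```

With these defaults, 25 units selling at 100 a day comes out `volatile`, while the same 25 units selling at one every ten days comes out `stable`.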

Product Type Controls Campaign Segmentation More Than Site Navigation

Most product_type values describe site navigation. Campaign segmentation needs business signals instead: margin tier, inventory depth, brand ownership, lifecycle stage.

If product_type doesn't reflect those signals, segmentation drifts immediately. Standard Shopping splits overlap. Performance Max asset groups compete internally. Reporting loses interpretability.

Rewrite taxonomy using rule-based transformations aligned with campaign structure. Google's product data specification separates product_type levels with " > " (a greater-than sign with a space on each side), so writing the hierarchy in that format lets the rewrite slot cleanly into listing filters and asset-group segmentation.

Example:

IF brand = private_label AND margin > 40% THEN product_type = priority > private_label > high_margin

Used inside listing filters and asset-group segmentation, this restores control over inventory grouping for bidding.
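As a sketch, the same rule in Python might look like the following. The `brand` and `margin` field names mirror the rule above but are assumptions about your item records, and margin is expressed as a fraction. Levels are joined with " > ", the separator Google's product data specification describes for product_type. Change `LEVEL_SEPARATOR` if your export tooling expects something else.

```python
LEVEL_SEPARATOR = " > "  # separator between product_type hierarchy levels


def rewrite_product_type(item: dict) -> str:
    """Apply the private-label, high-margin rule; otherwise keep the existing value."""
    is_private_label = item.get("brand") == "private_label"
    is_high_margin = item.get("margin", 0.0) > 0.40
    if is_private_label and is_high_margin:
        return LEVEL_SEPARATOR.join(["priority", "private_label", "high_margin"])
    return item.get("product_type", "")
```

In practice you would chain several rules like this in priority order, with the site-navigation value as the fallback.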

Missing Strategy Signals Force Platforms to Optimize for Revenue Instead of Profit

Platforms optimize using whatever signals exist in the feed. If the feed doesn't describe business priority, the platform defaults to predicted conversion value.

No margin labels, and optimization favors revenue instead of profit. No lifecycle labels, and clearance inventory competes with evergreen SKUs. No seasonality signals, and Smart Bidding overlearns temporary demand spikes.

These aren't diagnostics errors. They're signal gaps. Most feed tools export attributes. They don't control classification logic. So campaign behavior defaults to platform assumptions instead of business priorities.

Solve this by injecting strategy attributes directly into the export layer:

- Margin-tier labels

- Lifecycle stage tags

- Seasonal eligibility flags

- Promotion participation indicators

That changes what Smart Bidding optimizes for.
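Here is a minimal sketch of what that injection could look like at export time. The slot assignment is an assumption for illustration (custom_label_2 is left free for the inventory-stability label described earlier), and so are the margin cutoffs and the input field names.

```python
from datetime import date


def margin_tier(margin: float) -> str:
    # Hypothetical cutoffs; align them with your own contribution-margin bands.
    if margin > 0.40:
        return "high_margin"
    if margin >= 0.20:
        return "mid_margin"
    return "low_margin"


def strategy_labels(item: dict, today: date) -> dict:
    """Build the strategy attributes to merge into an item before export."""
    start, end = item.get("season_start"), item.get("season_end")
    # Items without a season window are treated as always in season.
    in_season = start is None or end is None or start <= today <= end
    return {
        "custom_label_0": margin_tier(item["margin"]),
        "custom_label_1": item.get("lifecycle", "evergreen"),
        "custom_label_3": "in_season" if in_season else "off_season",
        "custom_label_4": "promo" if item.get("on_promotion") else "no_promo",
    }
```

Merging the result into each item (`{**item, **strategy_labels(item, date.today())}`) gives asset groups and listing filters something other than predicted conversion value to segment on.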

A Workflow That Prevents Signal Drift Before It Starts

Reactive feed fixes arrive after algorithmic drift occurs. Classification governance prevents the drift earlier.

Weekly:

- Review Merchant Center warnings affecting identifiers, availability mismatches, and grouping signals

- Scan Commerce Manager for variant-family delivery inconsistencies and match rate drops

- Compare inventory velocity vs. feed refresh timing on top-spend SKUs
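The velocity-versus-refresh comparison is simple arithmetic: if a SKU's stock would run out in fewer hours than the gap between feed refreshes, the feed will advertise it as available after it is gone. A rough sketch, assuming you can export hourly sales rate and stock per SKU (the field names are hypothetical):

```python
def sells_out_between_refreshes(
    units_on_hand: float,
    units_sold_per_hour: float,
    feed_refresh_hours: float,
) -> bool:
    """True when the stock on hand covers less time than one feed refresh interval."""
    if units_sold_per_hour <= 0:
        return False
    hours_of_cover = units_on_hand / units_sold_per_hour
    return hours_of_cover < feed_refresh_hours


def at_risk_skus(skus, feed_refresh_hours):
    """Return the IDs of SKUs likely to sell out before the next refresh."""
    return [
        sku["id"]
        for sku in skus
        if sells_out_between_refreshes(
            sku["units_on_hand"], sku["units_sold_per_hour"], feed_refresh_hours
        )
    ]
```

Run it against the top-spend SKUs only. A long tail of slow movers will rarely trip the check and just adds noise to the weekly review.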

Monthly:

- Rebuild product_type segmentation against margin tiers used in listing filters

- Revalidate custom-label logic inside asset groups

- Use Listing Group reporting to audit which Item IDs accumulated conversion data during the last learning cycle

- Cross-reference channel performance reporting to see where misclassified SKUs are drawing spend

This turns feed management into signal control instead of diagnostics cleanup.

The Bottom Line

Format compliance versus semantic optimization: feed formatting prevents disapprovals, while the classification and business signals the feed carries shape algorithmic performance. Classification errors usually start long before diagnostics show anything wrong.

If performance degrades without diagnostic errors, the first place to look isn't bidding. It's feed classification.