Google loves Reddit. So does ChatGPT. In fact, Reddit is among the top 3 sources cited by AI responses.

Why? Because AI looks for "signals of truth" to validate your brand entity's quality before recommending it in responses.

To establish this truth, AI models require cross-referenced points from data you control and independent platform data. This moves the battleground from your website's architecture to your brand's presence in unstructured, third-party environments.

As highlighted by Neil Patel, Reddit operates as a foundational research layer that directly feeds AI search engines like ChatGPT, Perplexity, and Google's AI Overviews. Because users treat it as an unfiltered focus group for purchasing decisions rather than a direct checkout counter, Reddit generates high-intent, natural language training data.

For ecommerce operators, this means Reddit is not just a social channel; it is an open-source database.

Aligning your structured product data with these unstructured conversations allows you to provide the exact validation signals LLMs need to prioritize your inventory over competitors. But for these signals to be truly actionable, they must loop back and anchor that conversational trust into a fulfillment layer like Reddit DPA.

In this technical article, we look at the three layers that make up the Shoppable AI loop and we dissect the strategies sellers can leverage to build their own.

This Loop Has Layers

To successfully turn Reddit into a verified data source for LLMs, merchants should build a multi-layered data strategy built on Reddit and Google Merchant Center.

This requires combining unstructured community engagement with strict, API-driven catalog integrations.

Here’s a quick overview before we dive deeper:

Layer 1: Organic

This maps the conversational queries users actually type to the technical specifications of your product, bridging the gap between human intent and machine retrieval.

- Strategy: Subreddit Engagement

- Impact on AI Responses: Provides the "Natural Language" training data and sentiment analysis for the LLM.

Layer 2: Structured

Without this structured feed, the AI can only recommend your brand conceptually, lacking the exact endpoint required for a user to make a purchase.

- Strategy: Product Feed Optimization

- Impact on AI Responses: Provides DPA and GMC the "Hard Data" (SKUs, Specs, Pricing) that allows the AI to offer a specific recommendation.

Layer 3: DPA

Without an active DPA campaign, Reddit's system can match user intent to your catalog, but has no mechanism to act on it. The conversion moment passes.

- Strategy: Dynamic Product Ads + Conversions API

- Impact on Performance: Closes the loop between demonstrated intent and purchase, turning organic authority and structured catalog data into attributed revenue, with delivery signals that sharpen targeting over time.

That’s the quick overview. Now, let’s get into the details.

Layer 1: The Organic Consensus Protocol

To turn Reddit into a "Validation Layer" for your brand, merchants must move beyond traditional community management and into Semantic Consensus Building.

1. The "Entity Validation" AMA

In 2026, the value of an Ask Me Anything isn't the live traffic—it’s the permanent, factual transcript it creates for LLMs to scrape.

The Strategy:

Host technical AMAs featuring product designers or founders rather than marketing teams.

The Goal:

Use specific, non-marketing language to describe how products are made, their durability, and their specific use cases.

The Result:

When an LLM "reasons" through your brand, it finds high-authority, long-form text that confirms your brand is a legitimate, expert Entity.

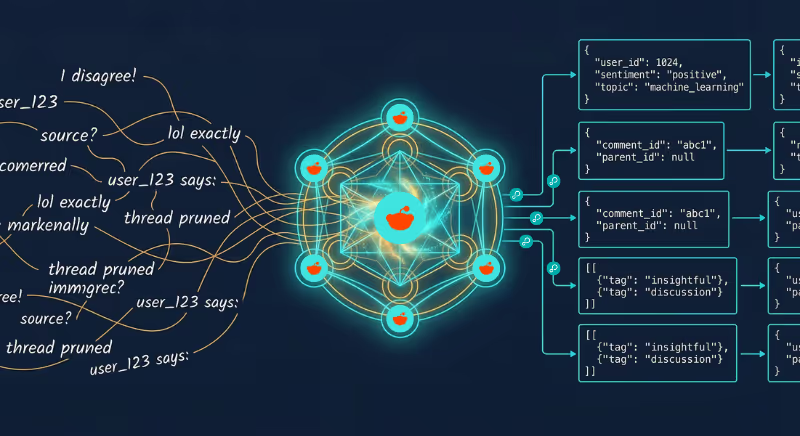

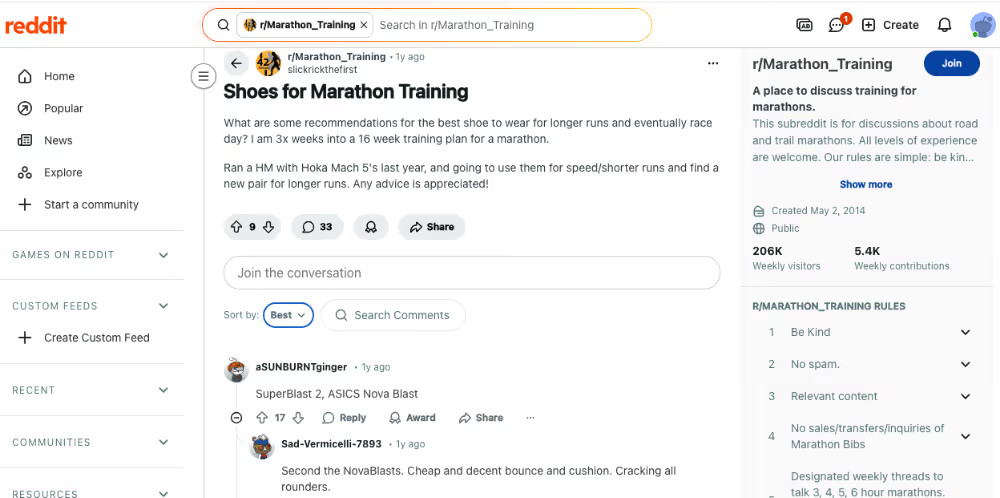

2. Seeding "Natural Language" Intent Signals

LLMs look for a bridge between what a user types (human intent) and your product’s technical specs.

The Strategy:

Identify subreddits where users discuss the problems your product solves (e.g., r/hiking for ankle fatigue).

The Action:

Instead of dropping links, focus on contributing to the "consensus" of a thread. Use phrases that mirror high-intent queries: "I found that boots with a wider toe box helped with my descent fatigue".

The Goal:

You are providing the "Subjective Data" (user experience) that the AI will later pair with your "Objective Data" (the catalog feed).

3. Managing the "Sentiment Score"

AI models like Gemini and Perplexity perform sentiment analysis on Reddit threads to determine if a product is a "recommended" or "cautionary" mention.

The Strategy:

Proactively address negative sentiment in niche subreddits. An unresolved complaint is a "Negative Truth" signal to an LLM.

The Action:

Provide factual, transparent resolutions. If a product had a version 1.0 flaw that was fixed in 2.0, state that clearly.

The Goal:

The AI will see the correction and can then "reason" that your current inventory (Layer 2) is the improved, validated version.

By executing this Organic Protocol, you ensure that when the AI identifies a recommendation in a thread, it has a "High Trust" signal to trigger the Shoppable AI Loop.

Layer 2: The Structured Data Engine

This is the implementation blueprint for your "hard" signals.

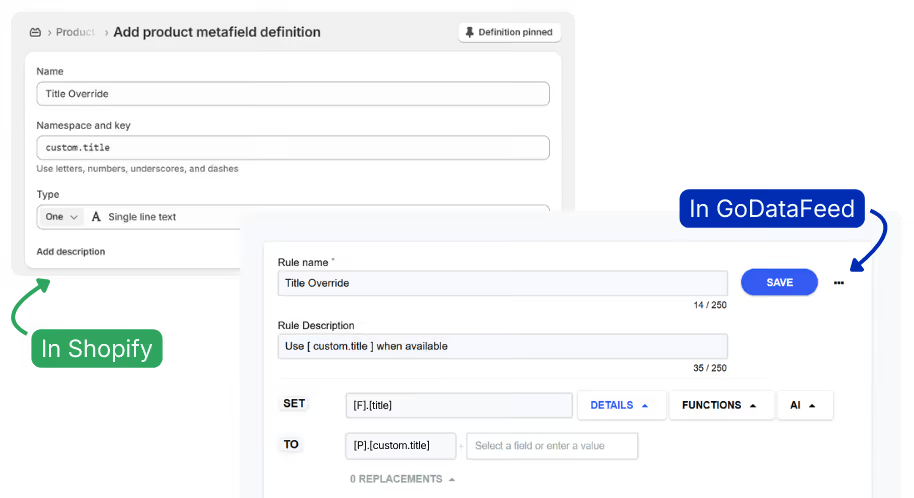

1. The Title Strategy: Semantic over Synthetic

Standard Google Shopping titles use a Brand + Category + Keyword structure.

This is "machine noise" to AI because LLMs prioritize Entity Clarity. They need to know exactly what the object is and who it is for in natural language.

Standard (Bad) Title Example:

UltraGrip 5000 - Men's Hiking Boots - Waterproof - Size 10 - Brown

LLM-Optimized (Good) Title Example:

UltraGrip 5000: Waterproof Men's Mountain Hiking Boots for Technical Terrain

Why?

The optimized version uses a relational descriptor ("for Technical Terrain").

When a user asks Gemini, "What boots should I get for a rocky hike in the rain?", the LLM can semantically link your product to the "rocky" and "technical" intent.

Feed Strategy:

To dominate the synthesis layer of search, you have to evolve from the "Brand + Category + Keyword" structure used in traditional Google Shopping feeds. AI models view that as machine noise.

Instead, your feed strategy must prioritize Entity Clarity and Relational Context.

The Semantic Pivot:

Traditional feeds are optimized for filters. LLM-optimized feeds are built for intent matching.

Put all those additional values and optional metafields to use. For example, use Bullet Points metafields as “relational descriptors” that can be pulled into high value fields like Description.

The Intent Bridge:

By using relational descriptors like "for Technical Terrain" or "for Beginner Backpacker," you allow the LLM to semantically link your product to a user’s situational constraints (e.g., "rocky hike" or "rainy day").

Business Impact:

This moves your product from being a "keyword match" to a "reasoned recommendation," significantly increasing your “share of model” and retrieval probability in AI Overviews.

2. Required Attribute Enhancements

To maximize visibility in AI-generated carousels and citations, you must populate the "Optional" Reddit/Google feed fields that most merchants ignore.

Layer 3: Reddit Dynamic Product Ads

To complete the three-tiers, Layer 3 (DPA) acts as the final nudge.

While Organic builds trust and Structured provides the facts, the Paid layer puts your inventory in front of users where they too come for a source of truth. Reddit. More precisely, topic-precise subreddits.

This requires a well-maintained product catalog and a tight signal loop between your storefront and Reddit's ad delivery system.

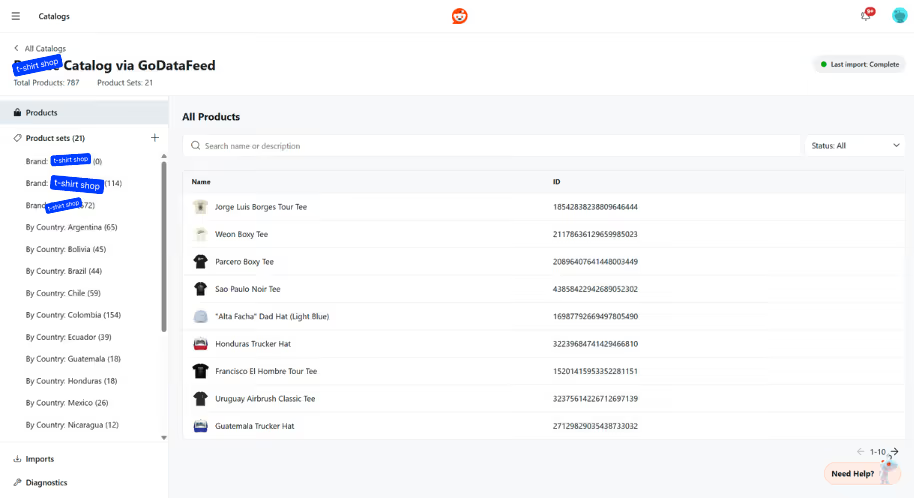

1. Feed Quality as the Foundation of DPA Performance

Reddit's Dynamic Product Ads pull directly from your catalog. The accuracy, completeness, and freshness of that catalog determines which SKUs get served, to whom, and with what creative.

The Technical Requirement:

Your feed must be updated frequently enough that out-of-stock and price-changed items don't reach active ad units. For high-turnover catalogs, hourly fetches are the standard.

The Execution:

Option 1: Use server-side GTM with Reddit's Conversions API to push real-time product events — ViewContent, AddToCart, Purchase — with accurate product metadata attached. This keeps Reddit's delivery system calibrated to what's actually available.

Option 2: If custom API triggers exceed your current dev capacity, a high-frequency scheduled feed fetch achieves most of the same result. A feed management platform like GoDataFeed lets you schedule hourly fetches without custom dev work. Stale data is the primary failure mode for DPA; consistent refresh intervals eliminate it.

The Result:

Ads serve only active, in-stock SKUs at current prices — protecting margin, reducing wasted spend, and keeping the experience credible for a Reddit audience that's already skeptical of advertising.

2. Closing the Intent Loop with DPA

Reddit users research before they buy. The organic layer captures that research behavior. DPA converts it.

The Mechanism:

When a user engages with content in a relevant subreddit — reading a thread, upvoting a recommendation, clicking into a product discussion — Reddit records that intent signal. Your DPA campaign then retargets that user with the specific product category or SKU that matches what they were researching.

The Fulfillment:

The system matches demonstrated interest (Organic) to available inventory (Structured) and serves a product-specific ad unit timed to high purchase intent.

The Advantage:

Unlike top-of-funnel display, DPA on Reddit targets users who've already self-selected into the consideration phase. The organic trust built in Layer 1 reduces the friction that typically kills retargeting performance on other platforms.

3. Catalog Rules That Improve Match Rate and Delivery

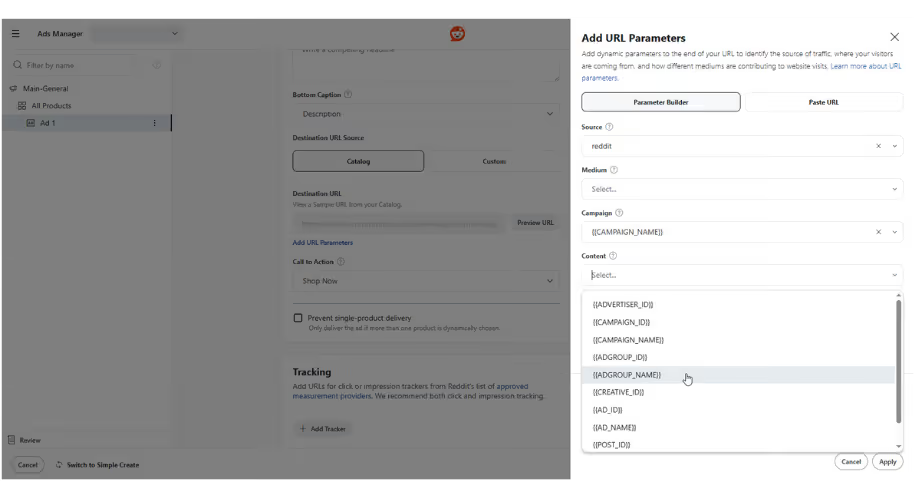

Within Reddit Ads Manager, the same catalog optimization principles from your Structured layer apply — with direct impact on ad performance.

Title Mapping:

DPA creative pulls titles from your feed. LLM-readable, human-first titles — descriptive, relational, free of keyword stuffing — produce higher CTR because they read like recommendations, not retail listings.

Attribute Enrichment:

Pass product_highlights and product_detail fields through your catalog. Reddit's system uses these to match ads to contextually relevant threads and placements, improving both relevance scores and delivery efficiency.

CAPI Signal Integrity:

Every Purchase and AddToCart event sent through the Conversions API trains Reddit's delivery model on what your actual buyers look like. The stronger and cleaner that signal, the more precisely Reddit targets net-new users who match that profile.

By aligning these three layers, your Reddit presence operates as a closed system: organic content earns trust, structured data establishes authority, and paid ads convert the intent both generate — with attribution signals that make the next campaign smarter than the last.

Feeding the Next Generation of Search

Executing this architecture requires precise alignment between your community management and your backend feed rules.

By maintaining an API-driven product feed on Reddit, you aren't just buying ads; you are actively injecting your inventory directly into the context window of the AI models replacing traditional search for high-intent shoppers.

For questions about your Structured Data or "Hard" Signals to Reddit, or to get your free Reddit feed setup, reach out to our team.