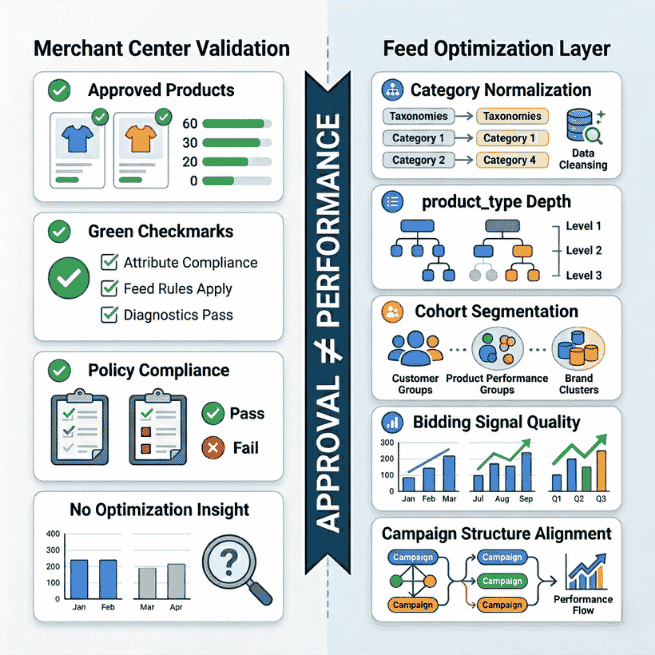

Most catalogs ship with product_type strings two levels deep, three spellings of the same category, and a google_product_category set once and never audited. Approval looks fine. Performance drifts anyway.

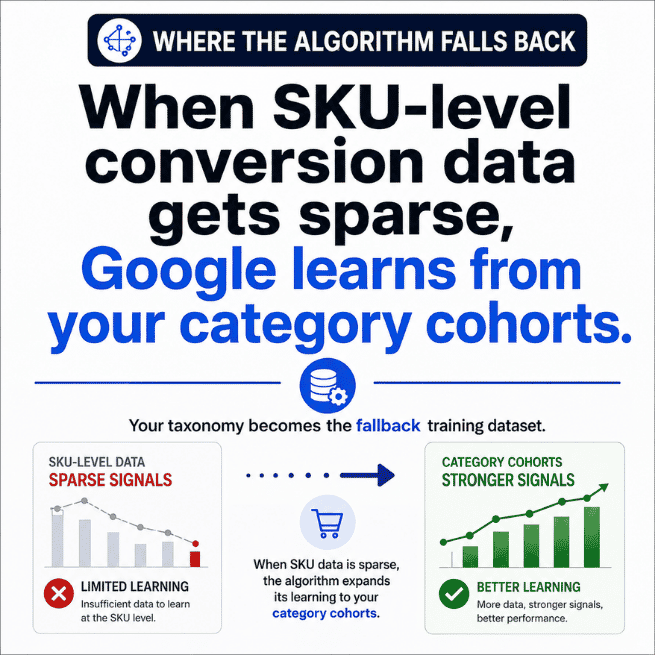

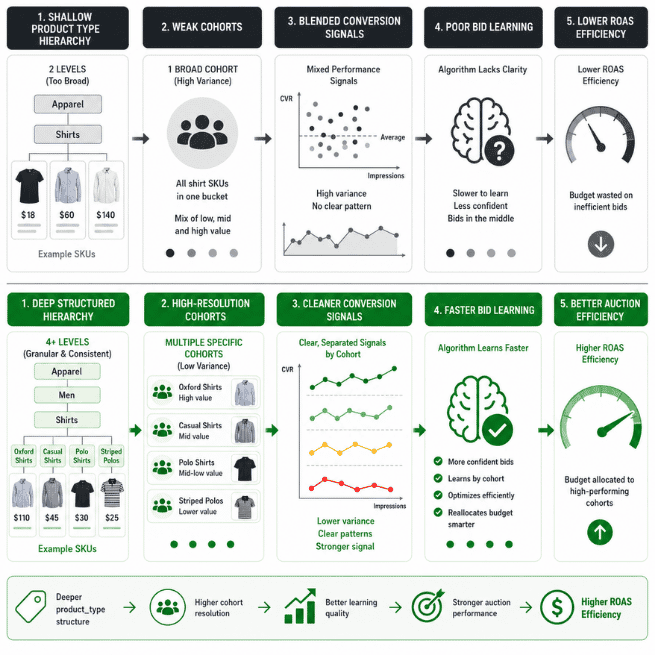

Here's the part most operators miss. When SKU-level conversion data is sparse, the bidding algorithm falls back to the cohorts your category structure defines. Shallow hierarchy means blunt cohorts. And blunt cohorts learn slowly, bid wrong, and quietly cost you margin.

Categorization is a routing layer, not a labeling layer

Stop thinking of google_product_category and product_type as metadata. They're signal infrastructure. GPC is your routing lever. It tells Google which auction pool you compete in. product_type is your segmentation lever. It tells Google how to subdivide that pool into bid groups your algorithm can actually learn against.

Most feed managers treat both as compliance fields and move on once Merchant Center shows green. That's enough for approval. Not enough to compete.

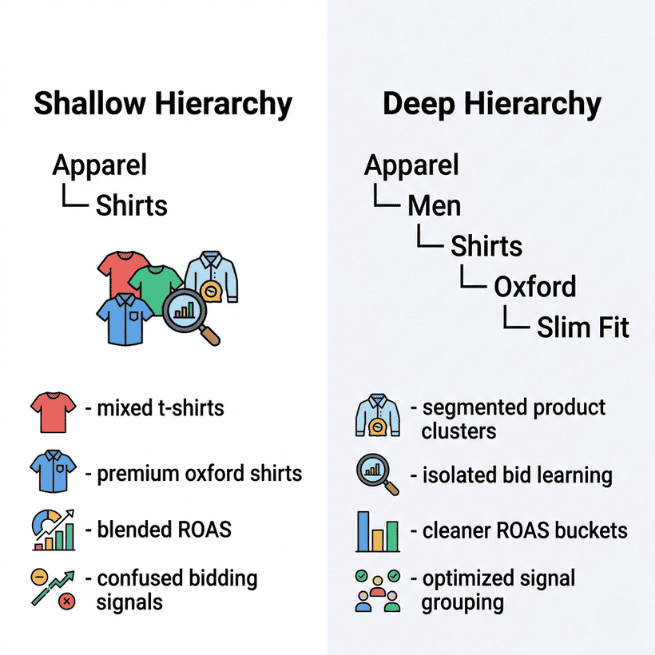

Shallow product_type hierarchies collapse listing-group precision

Here's where this breaks. Listing groups in Performance Max and Standard Shopping can subdivide on any product attribute. Brand, item ID, custom labels, product_type. But most operators default to product_type because it maps to how merchandising actually thinks about the catalog. When that's your primary subdivision lever, depth is the variable that decides how finely you can segment. Two levels of Apparel > Shirts gives you two levels of product_type subdivision. That's not a segmentation strategy. That's a single bucket with a label.

[custom labels for Shopping campaigns]

What goes wrong. A Shirts cohort containing $18 tees and $140 oxfords trains against a blended conversion rate that fits neither product. The algorithm averages them, bids in the middle, and you end up subsidizing your cheap SKUs with budget meant for your premium ones, destroying your Target ROAS efficiency. If you've ever stared at a listing-group report wondering why your premium SKUs aren't pulling their weight, this is usually why.

What you actually want to look at is your Shopping report pivoted by product_type (3rd level) and (4th level). If a meaningful share of impressions are coming from products with those levels empty, your hierarchy is depriving the bidding model of signal density. Fix it by building to a minimum of four levels. Category > Subcategory > Style > Attribute Cohort. Something like Apparel > Men > Shirts > Oxford > Slim Fit. That's the depth that gives PMax something real to subdivide.

One asymmetry worth knowing. This matters more for Shopping and PMax than for Search or Display, because those campaign types subdivide against the hierarchy itself. Depth isn't optional infrastructure. It's the substrate the algorithm learns on.

[Your feed becomes your segmentation engine]

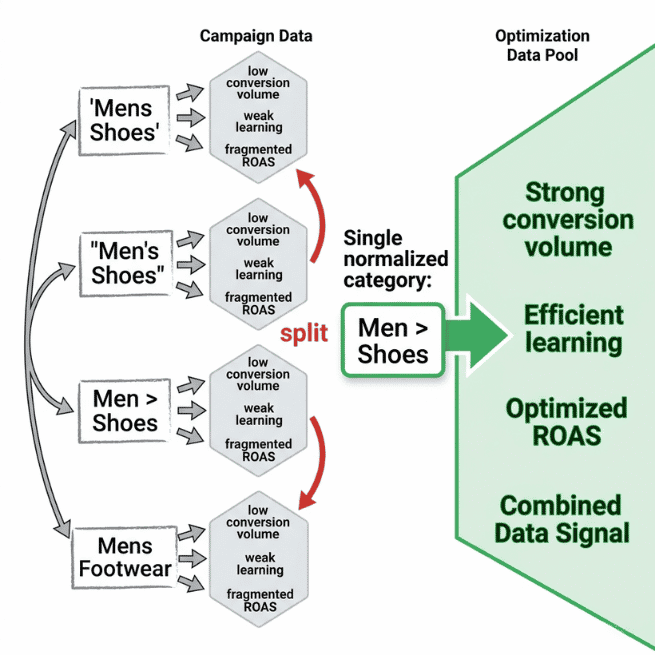

Depth without consistency isn't depth

Now the part that catches people who do build deep hierarchies. Your hierarchy is only as deep as its most fragmented level. If level 2 has Men's Shoes, Mens Shoes, and Men > Shoes floating around, you don't have a clean level 3. You have three level-3s the algorithm can't pool learning across.

This breaks invisibly. product_type is string-matched, not semantically grouped. Whitespace, apostrophes, capitalization, and > delimiter spacing all create distinct branches without firing a single warning in Merchant Center. The feed approves. The campaigns run. The cohorts fragment. [Text replacement rules]

This pattern shows up regularly. A top revenue category with four product_type variants splitting impressions four ways, each pulling a different ROAS, none with enough data to optimize on its own. The team keeps raising bids on the underperformers, not realizing they're bidding against fragments of the same cohort.

Quick diagnostic. Export your full product_type list, dedupe it in a sheet, count distinct strings. If your distinct count is meaningfully higher than your actual category count, double or more, you've got a normalization problem hiding underneath your depth. The fix lives at the feed layer. Controlled vocabulary enforced before the feed hits Merchant Center, either through a supplemental feed with category-mapping rules or through rule-based transformations in your feed tool. This is where GoDataFeed earns its keep for most operators. You get supplemental-style control over a fragmented category column without managing a second feed file by hand.

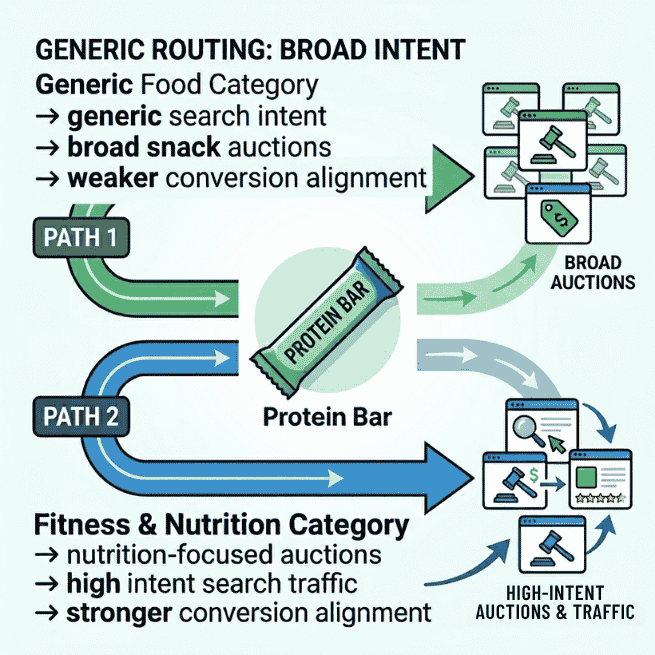

GPC sets the auction pool. Product type segments inside it.

GPC is the upstream routing decision. Tag a protein bar Food, Beverages & Tobacco > Food Items and you compete in generic snack auctions. Tag the same bar Health & Beauty > Health Care > Fitness & Nutrition > Nutrition Bars and you compete for intent-rich nutrition queries.

There's no warning when GPC is too shallow. Products approve, queries just don't match the intent you expected. Pull your search terms report, filter by the campaign or asset group containing the products in question, then check the GPC assignments on those products. If the queries skew generic when you expected specificity, your GPC is too high in the taxonomy. Assign at the deepest applicable node. Google's taxonomy goes deep, five to seven levels in most major verticals. Use them.

GPC gets you into the right auction. Depth determines whether you win it.

Cohort resolution is what your bidding algorithm actually optimizes against

To scale efficiently, Performance Max and Standard Shopping rely on the cohorts your category structure defines.

The chain looks like this. Depth determines cohort resolution. Cohort resolution determines learning quality. Learning quality determines auction performance. Same campaign, same budget, same products, materially different results based on whether your categorization gives the algorithm clean groups to learn against.

Categorization isn't pre-work for campaign strategy. It is campaign strategy, executed at the feed layer.

Where depth logic should live in your feed pipeline

The feed management layer is the realistic answer for most operators. Depth rules, normalization, and category mapping live alongside the rest of your feed transformations, iterate quickly, and don't require merchandising buy-in to ship. Source-catalog work is worth it when your taxonomy is stable and merch will own it long-term. Supplemental feeds make sense when you're running channel-specific depth strategies. Google uses GPC and product_type, Meta runs on its own catalog category logic, Amazon uses its browse node taxonomy entirely. Different ranking signals, different shopper intent, different hierarchies. They shouldn't share one.

For ongoing hygiene, build a monthly audit into your SOP. Pull the product_type distinct-string count, check listing-group impression distribution at levels 3 and 4, and spot-check GPC depth on your top revenue categories. Fifteen minutes a month catches drift before it shows up in your ROAS report eight weeks later.

Bottom line

If you can't articulate, in one sentence, what your product_type hierarchy is segmenting for, your categorization is structural overhead, not strategy. Depth is the variable. Consistency makes it real. GPC routes it. Cohort resolution is what the algorithm falls back on when SKU signal thins out.

Run the audit this week. Whatever you find is what your algorithm has been learning against.